Today's automation landscape is experiencing revolutionary trends: revolutions, one might say, that the IT industry has long been familiar with but that are still new to the field of automation. This convergence of Information Technology (IT) and Operational Technology (OT) is best illustrated with practical examples.

(Cover © Gorodenkoff/stock.adobe.com)

This article by Christofer Dutz was first published in the April 2024 issue of Computer & Automation journal, following an invitation from WEKA Business Medien. The original article can be accessed at the end of the text.

Many manufacturing companies are currently in a position reminiscent of the IT sector 15 years ago. As a result, they are increasingly adopting IT project methodologies, with Scrum being the most prominent. In the OT world, however, a company rarely switches to Scrum overnight; instead, it tends to adopt aspects of Scrum incrementally. Teams turn to more agile methods to avoid extensive upfront planning and to start implementation sooner, while management often still insists on fixed deadlines, specific functionality, and guaranteed support availability.

The problem is that you either work in an agile way or you don't. There is no such thing as being semi-agile, because agile projects rely on numerous techniques and methods, each with its own justification. Omitting some of them undermines the whole concept.

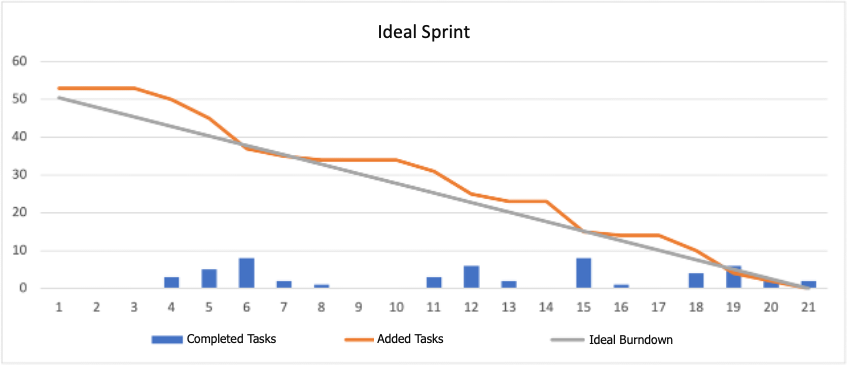

For instance, in the automation world, a Scrum team's goal at the end of a sprint (usually a phase of two to three weeks) is for the sprint burndown chart to hit zero, indicating that all planned tasks were completed. Scrum does not eliminate planning; it merely shortens the planning periods.

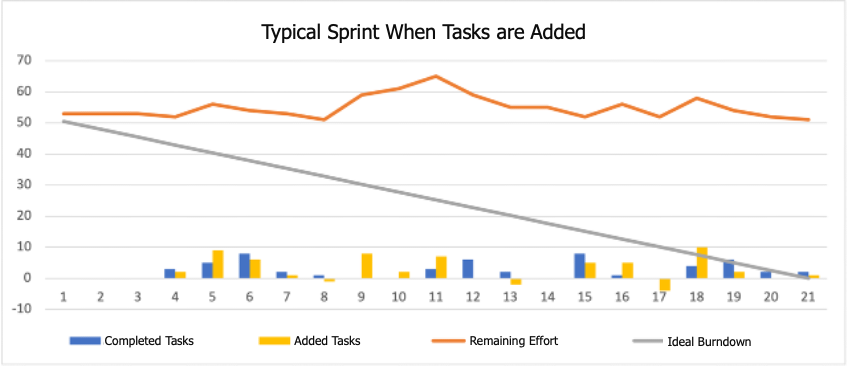

Unfortunately, the line often remains flat or, in many cases, rises towards the end of the sprint. A flat line suggests that either no work was done, which is unlikely, or that as much work was added during the sprint as the team completed. A rising line indicates that more tasks were added than the team could realistically complete, which undermines the sprint's goals.
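The patterns described above can be made concrete with a small sketch. It assumes a hypothetical list of remaining story points recorded at the end of each sprint day; the function name `classify_burndown` and the sample numbers are purely illustrative and not taken from a real project.

```python
from typing import List


def classify_burndown(remaining: List[int]) -> str:
    """Classify a sprint by comparing remaining work at start and end.

    `remaining` holds the open story points per day (hypothetical data);
    index 0 is the value right after sprint planning.
    """
    if len(remaining) < 2:
        raise ValueError("need at least two data points")
    start, end = remaining[0], remaining[-1]
    if end == 0:
        return "completed"   # the chart hit zero
    if end > start:
        return "rising"      # more work was added than completed
    if end == start:
        return "flat"        # as much work was added as completed
    return "incomplete"      # work went down but did not reach zero


print(classify_burndown([40, 32, 25, 14, 6, 0]))    # completed
print(classify_burndown([40, 38, 41, 39, 40, 40]))  # flat
print(classify_burndown([40, 42, 41, 45, 47, 50]))  # rising
```

A check like this only compares the first and last data points; it says nothing about why work was added, which is the question the team actually has to answer.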

The challenge is that sprint planning is meant to precisely define what the team will do next. After this, the sprint is "frozen", and the team focuses on execution. Adding tasks mid-sprint is seen as a "cardinal sin". Scrum’s solution for dealing with significant issues that arise suddenly is to cancel the current sprint and plan a new one.

Often, the justification given for adding tasks is, "The system is down; we need to fix these issues immediately." This is understandable and highlights a fundamental problem: Scrum might not be the right methodology for tasks like support. In such cases, other agile methodologies like Kanban are more suitable, although Kanban also has its weaknesses in traditional project management.

One approach that can work is having one team handle two projects simultaneously—one using Scrum for product development and the other using Kanban for support. Team members work on both projects according to their usual workload, allocated proportionally to each project. If an employee typically spends two days a week on support, they would be assigned 40% to the Kanban support project and 60% to the Scrum project. This setup maintains some predictability for the development project while ensuring that system downtimes are managed.
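The proportional split can be expressed as a short calculation. This is only a sketch: the function name `split_allocation` and the default five-day week are assumptions made for illustration.

```python
from typing import Tuple


def split_allocation(support_days: float, working_days: float = 5.0) -> Tuple[int, int]:
    """Return (support %, development %) for one team member.

    Both parameters are illustrative: `support_days` is the time a person
    typically spends on support per week, `working_days` the length of the week.
    """
    if not 0 <= support_days <= working_days:
        raise ValueError("support_days must be between 0 and working_days")
    support_pct = round(100 * support_days / working_days)
    return support_pct, 100 - support_pct


kanban_pct, scrum_pct = split_allocation(support_days=2)
print(f"Kanban support: {kanban_pct}%, Scrum development: {scrum_pct}%")
# Kanban support: 40%, Scrum development: 60%
```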

Automated Testing and Simulation: The Lag in OT

In testing, the automation sector is far behind IT—possibly by 15 to 20 years. The main problem in OT is the reliance on actual hardware; sometimes, equipment must be built first. Automation tool manufacturers have only recently begun to address the lack of established tools for automated testing and simulation.

To my knowledge, no engineering environment exists in which testing is a fundamental part. Some rudimentary testing methods are available, but their maturity is far from that of IT solutions, where testing is a critical pillar of quality assurance and is reflected both in the development tools themselves and in the range of dedicated testing tools available.

However, some progress is being made, with the first solutions that allow a simulated PLC to test a simulated system, although these are not yet in use. A common refrain is, "We don't have time or money for testing, or both." In practice, it is often possible to negotiate for a PLC for testing purposes, but these test devices are usually the first to be reassigned when hardware deliveries are delayed and they are needed elsewhere.
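To illustrate the idea of testing simulated control logic against a simulated system, here is a minimal sketch in plain Python. It is not PLC code and does not use any vendor tool; the tank model, the two-point controller, and all names and numbers (`pump_logic`, `simulate`, the 20/80 thresholds, the flow rates) are hypothetical and chosen only to keep the example small.

```python
from typing import List


def pump_logic(level: float, pump_on: bool, low: float = 20.0, high: float = 80.0) -> bool:
    """Two-point (hysteresis) control: switch on at or below `low`, off at or above `high`."""
    if level <= low:
        return True
    if level >= high:
        return False
    return pump_on  # inside the band: keep the previous state


def simulate(steps: int, level: float = 50.0, inflow: float = 3.0, outflow: float = 2.0) -> List[float]:
    """Run the control logic against a very simple simulated tank."""
    pump_on = False
    history = []
    for _ in range(steps):
        pump_on = pump_logic(level, pump_on)
        # The tank drains constantly; the pump adds `inflow` while it is on.
        level += (inflow if pump_on else 0.0) - outflow
        history.append(level)
    return history


# Automated test: the tank must never run dry or overflow (0 to 100 scale).
levels = simulate(steps=500)
assert min(levels) > 0.0, "tank ran dry"
assert max(levels) < 100.0, "tank overflowed"
```

Because neither a real controller nor a real tank is involved, a test like this can run on every change, without waiting for hardware to be built or delivered.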

Testing Efficiency: A Critical Evaluation

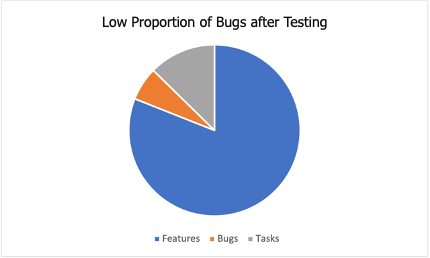

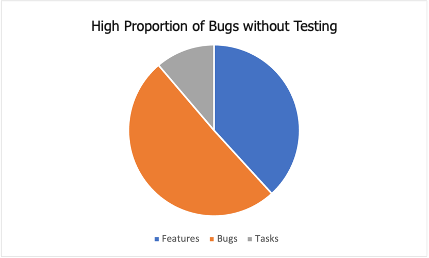

The efficiency of testing is often judged subjectively and therefore wrongly. A practical example comes from a project in which the author was involved. The project's Scrum team was criticized for being less productive than others; specifically, it was accused of spending too much time on testing compared with teams that processed more tasks in the same timeframe.

However, a detailed analysis revealed a different story. The Scrum Master documented that while the criticized team spent about 5% of their time fixing bugs, other teams averaged 45% of their time on such corrections. This clearly demonstrated that the team investing in thorough testing was actually far more productive in the long run.
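The effect of these two percentages is easy to quantify. The sketch below assumes a hypothetical 40-hour working week per person; the function name `bugfix_hours` is illustrative. The article does not state how much time the teams spent on testing, so the calculation only covers the time lost to bug fixing.

```python
def bugfix_hours(bugfix_percent: int, hours_per_week: int = 40) -> float:
    """Hours per person and week spent on fixing bugs.

    `hours_per_week` is an assumed value, not a figure from the project.
    """
    return bugfix_percent * hours_per_week / 100


tested_team = bugfix_hours(5)    # 2.0 hours
other_teams = bugfix_hours(45)   # 18.0 hours
print(f"Difference: {other_teams - tested_team} hours per person and week")
# Difference: 16.0 hours per person and week
```

Put differently, the criticized team could spend up to 40 percentage points more of its time on testing than the other teams and still have the same amount of time left for new work.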

The takeaway here is clear: although testing may appear costly at first glance, its long-term benefits, in the form of less time spent on fixes and rework, prove to be worth it.

The Use of Cloud: A Cautious Migration

Another current trend spilling from IT into the world of automation is the drive to migrate to the cloud, particularly in areas like Cloud-based Manufacturing Execution Systems (MES) and Programmable Logic Controllers (PLCs). Storage and computing power in the cloud are theoretically almost unlimited, but they are not free. Nonetheless, such solutions appear attractive to some users, likely because the skills needed to operate a local big data and machine learning cluster are simply not present in many companies. When using the cloud, several cost factors come into play, which are different from traditional data processing and must be considered:

Internet or Cloud Connection Costs: Initiatives that would move all production data to the cloud often have to be stopped early on, because the calculations show that the necessary internet connection either could not be provided or would be disproportionately expensive.

Storage and Operation Costs: If a company rents a server from any cloud provider and operates it 100% of the time, it will always be more expensive than running the same server locally. The likely exception is virtual servers, where several virtual servers share the capacity of a real server. Here, companies can save money with the cloud, as they avoid the costs of procuring and setting up hardware and of employing specially trained personnel.

Transfer Costs: Cloud providers typically charge for data transfer. The more a company works with its data, the higher the transfer costs. What many forget is that the longer a company pumps data into a cloud provider's storage, the more expensive it becomes to move that data to a different provider later. It remains to be seen how this will evolve: just a few weeks ago, Google made headlines with a marketing stunt announcing that it was removing data transfer fees for customers moving their data off Google Cloud.

In the IT world, some companies are currently finding their cloud costs unsustainable, leading them to reconsider and sometimes to move workloads back into their own data centers. There are also now IT service providers that specialize in optimizing cloud costs for companies.

Actions like those by Microsoft have also made companies take a closer look at cloud issues: a few weeks ago, Microsoft's announcement that it would discontinue its Azure IoT Central service caught some companies unprepared. Initially, it seemed they would be forced to redevelop a critical part of their solutions within two months. Microsoft later backtracked and labeled the announcement as misinformation. Still, the episode showed many companies how dependent they have become on cloud providers. It is therefore always advisable not to rely too heavily on a single cloud provider's offerings; only then can one react flexibly in such situations.

It is foreseeable that a certain cloud disillusionment will set in within automation as well, and that the storage and processing of data will find its way back into companies, at least in part.
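A rough back-of-the-envelope calculation for the connection and transfer costs might look like the following sketch. All numbers are placeholders: the daily data volume, the storage period, and in particular the per-GB price are assumptions for illustration and do not reflect any provider's actual tariff.

```python
def required_mbit_per_s(gb_per_day: float) -> float:
    """Average uplink needed to ship `gb_per_day` continuously (decimal units)."""
    bits_per_day = gb_per_day * 8e9
    return bits_per_day / 86_400 / 1e6  # 86,400 seconds per day


def exit_cost(gb_per_day: float, days_stored: int, price_per_gb: float) -> float:
    """Cost of transferring all accumulated data out at a flat per-GB price."""
    return gb_per_day * days_stored * price_per_gb


# Hypothetical plant producing 500 GB of production data per day.
uplink = required_mbit_per_s(500)
# Hypothetical flat price of 0.05 currency units per GB after one year of storage.
cost = exit_cost(500, days_stored=365, price_per_gb=0.05)
print(f"Sustained uplink: {uplink:.1f} Mbit/s, exit cost after one year: {cost:.2f}")
```

The uplink figure is an average; real connections also need headroom for peaks and retransmissions, and the exit cost grows with every day that more data is stored.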

Conclusion: Learning from IT

The disruptions currently shaking the world of automation are not new; other industries, especially IT, have already navigated these changes. Unfortunately, many automation projects are currently making the same mistakes that were observed in IT years ago. Automation could learn from another industry—it just needs to do so.

Two suggestions concern agility and testing:

Agility: Consulting with IT experts to develop tailored solutions and provide appropriate training could prevent costly and lengthy errors.

Testing: Testing needs significant improvement. The industry should leverage open-source initiatives to collaborate on solutions that benefit all players, a strategy that could mitigate the high costs and individual challenges of developing proprietary systems.

References

Google: Cloud switching just got easier: Removing data transfer fees when moving off Google Cloud

Microsoft: Microsoft's commitment to Azure IoT